What Happens When Every Department Has Its Own AI Agent


As AI adoption accelerates, many companies reach the same stage at roughly the same time.

Marketing has its own AI agent. Sales has another. HR adopts one. Product and engineering follow soon after.

On the surface, this looks like progress. Teams move faster, manual work disappears, and productivity jumps.

But once every department has its own AI agent, new questions start to appear:

How do these agents interact?

Who owns decisions?

What happens when agents disagree?

In this article, we explore what really happens when every department has its own AI agent, with practical examples from across the organization.


Why Departments Are Adopting AI Agents

AI agents are appealing because they mirror how humans work.

They can read information, reason about context, decide what to do, and take action. That makes them especially useful for day-to-day tasks that involve judgment rather than rigid rules.

As a result, departments naturally adopt agents to solve their own problems first.

Marketing: Speed, Scale, and Brand Risk

Marketing teams often adopt AI agents early.

A marketing AI agent can:

Generate campaign ideas

Draft ads, emails, and landing page copy

Analyze performance data

Personalize content at scale

The upside is speed. Campaigns that once took weeks can be launched in days.

The risk appears when the agent operates in isolation. Without shared context, it may drift from brand voice, reuse outdated messaging, or optimize for short-term metrics instead of long-term strategy.

Marketing agents work best when they are grounded in clear brand guidelines and connected to reliable performance data.

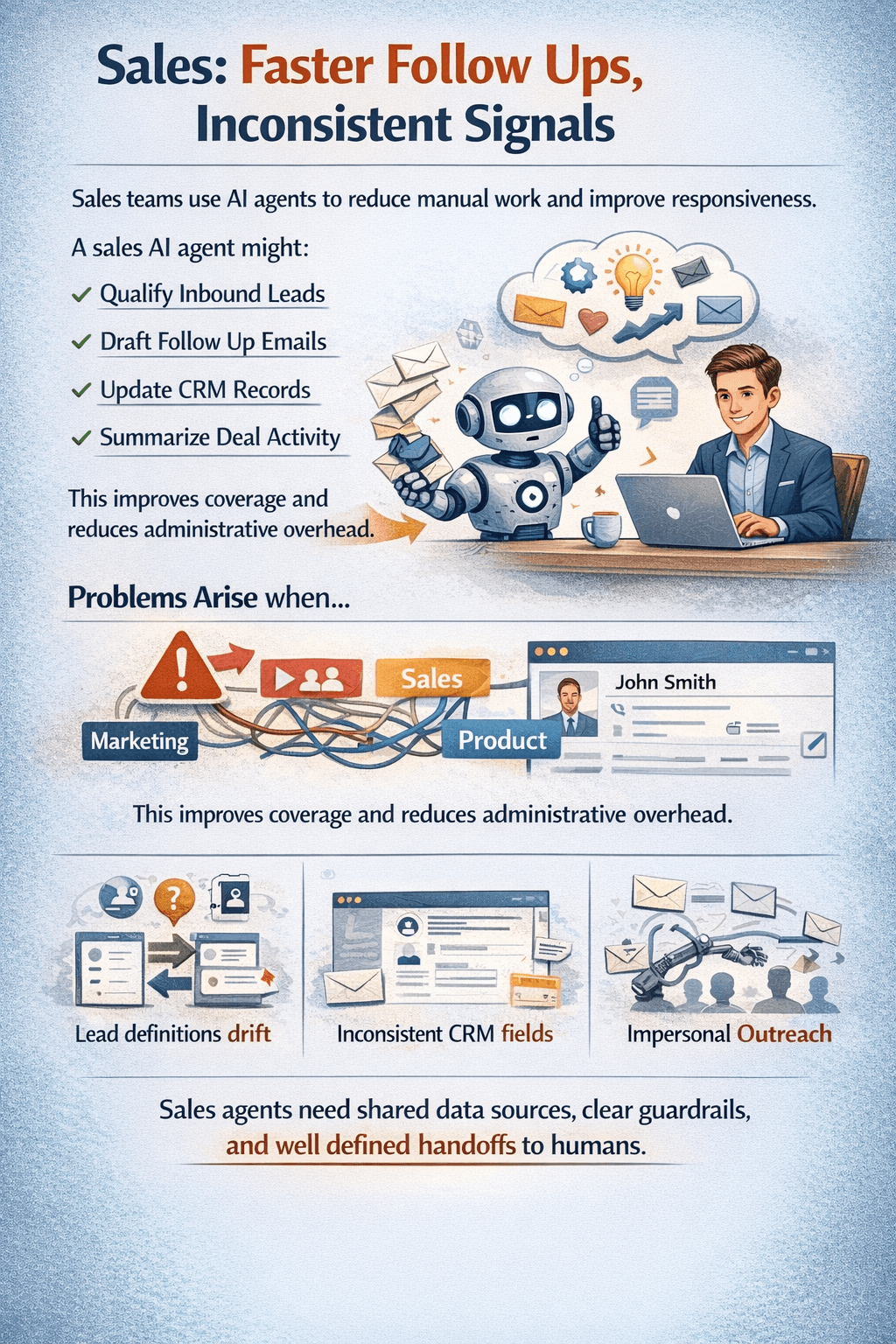

Sales: Faster Follow-Ups, Inconsistent Signals

Sales teams use AI agents to reduce manual work and improve responsiveness.

A sales AI agent might:

Qualify inbound leads

Draft follow-up emails

Update CRM records

Summarize deal activity

This improves coverage and reduces administrative overhead.

Problems arise when sales agents are not aligned with marketing or product data. Lead definitions can drift, CRM fields become inconsistent, and automated outreach can feel impersonal if not carefully controlled.

Sales agents need shared data sources, clear guardrails, and well-defined handoffs to humans.
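To make the idea of a guardrail with a human handoff more concrete, here is a minimal sketch. It does not describe any particular CRM or agent framework: the `Lead` fields, both threshold constants, and the `route_lead` function are hypothetical names and values chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical shared lead definition: in this sketch, marketing and sales
# agents would both read these constants instead of keeping private copies.
QUALIFIED_SCORE = 70          # assumed threshold, not a recommendation
MAX_AUTO_DEAL_VALUE = 10_000  # assumed guardrail: larger deals go to a person


@dataclass
class Lead:
    email: str
    score: int         # 0-100, produced by some upstream scoring step
    deal_value: float  # estimated deal size in the company's currency


def route_lead(lead: Lead) -> str:
    """Return who acts next: nobody yet, a human rep, or the agent."""
    if lead.score < QUALIFIED_SCORE:
        return "nurture"          # not qualified under the shared definition
    if lead.deal_value > MAX_AUTO_DEAL_VALUE:
        return "human_handoff"    # guardrail: high-value deals need a person
    return "agent_follow_up"      # safe for an automated follow-up draft
```

Keeping the lead definition in one shared place is one way to limit the drift described above, and the explicit `human_handoff` branch makes the point where the agent stops acting visible in the code.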

HR: Efficiency Meets Sensitivity

HR AI agents focus on repetitive but time-consuming tasks.

Common use cases include:

Screening resumes

Scheduling interviews

Answering employee questions

Summarizing feedback and reviews

These agents free up HR teams to focus on people, not paperwork.

However, HR data is sensitive. If agents are not carefully governed, risks include biased decisions, inconsistent answers to employees, and privacy or compliance issues.

HR agents require stricter controls, transparency, and clear escalation paths.
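As a rough illustration of what an escalation path with an audit trail could look like, consider the sketch below. It rests on several assumptions: the topic list, the `handle_question` function, and the in-memory `audit_log` are hypothetical, and simple keyword matching stands in for whatever classification a real HR agent would use.

```python
import re
from datetime import datetime, timezone

# Assumed list of topics an HR agent should never answer on its own.
SENSITIVE_TOPICS = {"salary", "medical", "termination", "complaint"}

# In-memory audit trail for this sketch; a real system would persist it.
audit_log: list[dict] = []


def handle_question(employee_id: str, question: str) -> str:
    """Decide whether the agent answers or a human HR partner takes over."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    sensitive = sorted(words & SENSITIVE_TOPICS)
    action = "escalate_to_hr_partner" if sensitive else "agent_answers"
    # Transparency: record every decision together with the reason for it.
    audit_log.append({
        "employee_id": employee_id,
        "action": action,
        "matched_topics": sensitive,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return action
```

The design choice worth noting is that every question is logged, not only the escalated ones, so a reviewer can later check both what the agent handed off and what it chose to answer itself.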

Product: Insight Without Context Loss

Product teams use AI agents to make sense of large volumes of feedback.

A product AI agent can:

Analyze user feedback

Cluster feature requests

Summarize research notes

Monitor usage patterns

This helps teams stay closer to users.

The challenge is context. Not every request represents real demand, and not every signal aligns with company strategy. If agents operate independently, they can overweight noisy inputs.

Product agents should support human decision-making, not replace it.

Engineering: Velocity and Hidden Complexity

Engineering AI agents are becoming common.

They often:

Review code

Suggest refactors

Generate tests

Assist with debugging

This can significantly increase development speed.

But without shared standards, engineering agents can introduce inconsistency. Architectural decisions may drift, technical debt can accumulate, and code quality can vary between teams.

Engineering agents work best when aligned with clear standards, strong feedback loops, and human ownership.

The Hidden Problem: AI Silos

When every department has its own AI agent, a new kind of silo emerges.

Each agent optimizes for local goals, not company-wide outcomes.

Without coordination:

Data becomes inconsistent

Decisions conflict

Accountability becomes unclear

This is not a technology problem. It is a system design problem.

The Right Way to Scale Departmental AI Agents

The solution is not fewer agents, but better structure.

Successful organizations:

Share data across agents

Define clear responsibilities

Add governance and oversight

Combine agents with workflows and human review

When designed as part of a connected system, AI agents complement each other instead of competing.
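The structure described here (clear responsibilities, oversight, and human review) can be sketched as a small coordination step. This is an illustrative sketch under stated assumptions, not a prescribed architecture: the `OWNERS` table, the `NEEDS_HUMAN_REVIEW` policy, the `Proposal` type, and the `resolve` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical ownership table: which department's agent may decide what.
OWNERS = {
    "discount": "sales",
    "messaging": "marketing",
    "roadmap": "product",
}

# Assumed policy: these decision types always need a human sign-off.
NEEDS_HUMAN_REVIEW = {"roadmap"}


@dataclass(frozen=True)
class Proposal:
    agent: str     # department the proposing agent belongs to
    decision: str  # decision type, ideally a key in OWNERS
    value: str     # what the agent wants to do


def resolve(proposals: list[Proposal]) -> dict[str, str]:
    """Pick one outcome per decision type, or escalate to a human."""
    outcome: dict[str, str] = {}
    for decision in sorted({p.decision for p in proposals}):
        owner = OWNERS.get(decision)
        # Only proposals from the owning department's agent count.
        owned = [p.value for p in proposals
                 if p.decision == decision and p.agent == owner]
        if owner is None or decision in NEEDS_HUMAN_REVIEW or len(set(owned)) != 1:
            outcome[decision] = "escalate_to_human"
        else:
            outcome[decision] = owned[0]
    return outcome
```

For example, if the sales agent proposes a 10% discount and the marketing agent proposes 25%, `resolve` keeps the sales value because sales owns that decision. A roadmap change, or any decision type with no listed owner, is escalated to a person instead of being settled between agents.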

Final Thoughts

AI agents can transform every department, but only when they are treated as part of a larger system.

The future of enterprise AI is not one super agent.

It is many focused agents, working together, with humans still in control.

That is how AI scales inside real businesses.