What do the terms AI, Machine Learning (ML), and Deep Learning (DL) mean?

If you read this article from start to finish, you should not need another blog post to understand AI terminology. It will clear up the confusion around terms like AI, Machine Learning, and Deep Learning. You will finally know what ChatGPT really is :)

Artificial Intelligence has become one of those terms that everyone hears, but few people feel confident explaining. We use it daily, on our phones, at work, while shopping online, yet the words around it often sound too technical and confusing.

If you’ve ever wondered what the real difference is between AI, Machine Learning, Deep Learning, and tools like ChatGPT, you’re not alone.

Let us break it down from the ground up, assuming you have no prior knowledge :)

What AI means (nothing fancy)

Artificial Intelligence (AI) = a computer that behaves like it’s intelligent

AI is not a specific technology.

It’s a concept.

That’s it.

If a machine makes decisions, solves problems, or acts “smart”, we call it AI.

Example

A chess-playing computer

Google Maps choosing routes

Early examples of AI didn’t involve learning at all. Chess programs, calculators, and rule-based systems were considered AI because they performed tasks that normally required human thinking.

The important thing to understand is this: AI describes what a system does, not how it does it.

⚠️ AI does NOT say how the machine works.

It only describes what it looks like from the outside.

Actually, at first, humans tried:

“Let’s write rules for everything.”

Example in chess: IF opponent moves pawn → respond with knight

The problem was that rule-based AI needs too many rules and breaks easily, because humans can’t think of every case.

So people needed a better way.
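Here is a minimal sketch of what “rules for everything” looks like in code. The rule table and the function name `rule_based_move` are made up for illustration; the point is only that any situation nobody wrote a rule for leaves the system stuck.

```python
# A hypothetical hand-written rule table: every situation needs its own rule.
RULES = {
    "opponent moves pawn": "respond with knight",
    "opponent moves knight": "respond with bishop",
}


def rule_based_move(situation: str) -> str:
    """Look up the reply for a situation; give up when no rule was written."""
    if situation in RULES:
        return RULES[situation]
    return "no rule for this situation"


print(rule_based_move("opponent moves pawn"))   # respond with knight
print(rule_based_move("opponent moves queen"))  # no rule for this situation
```

Every new case means another line in `RULES`, and a human has to think of it first.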

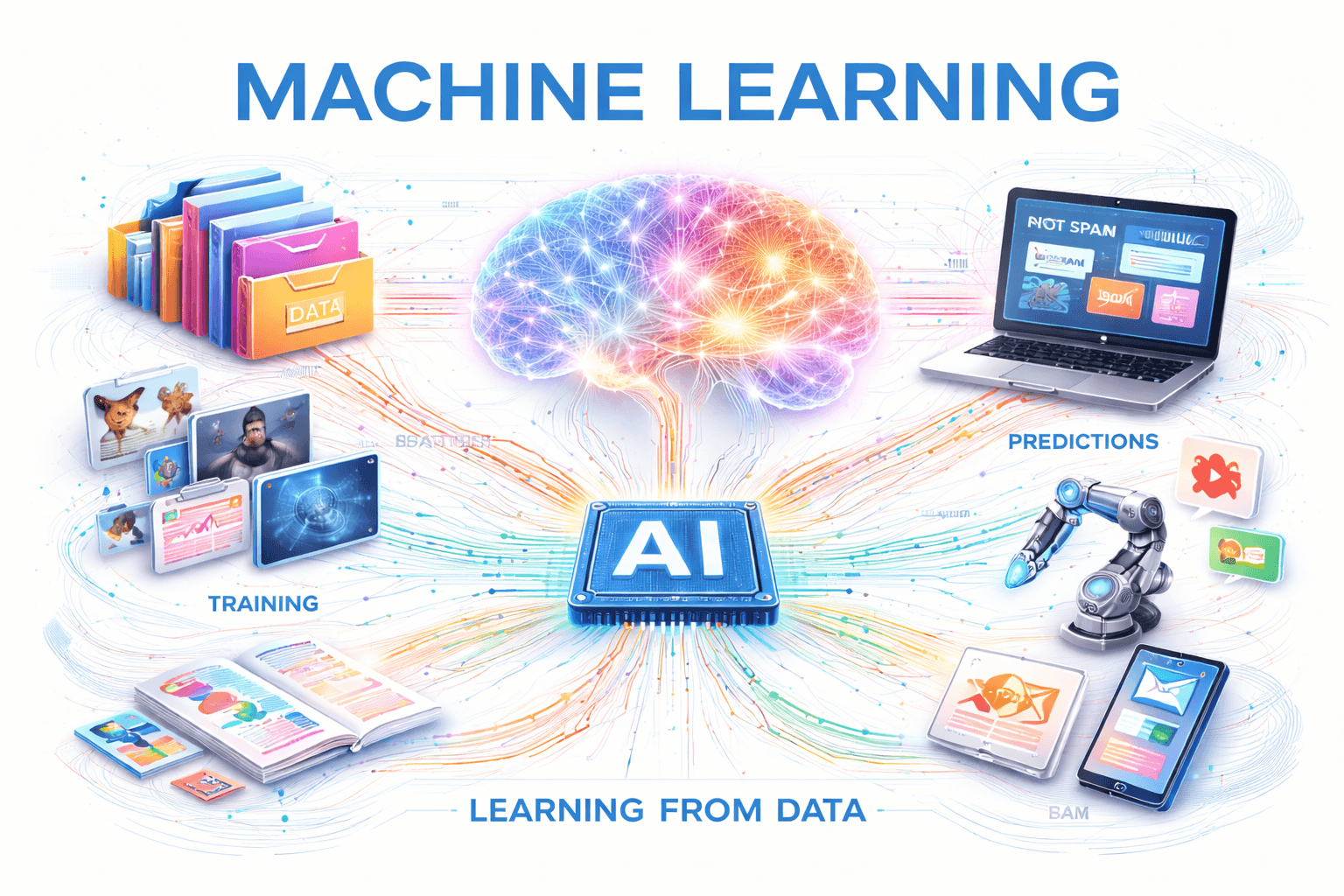

Machine Learning (ML)

For a long time, AI systems worked by following rules written by humans, like in the chess example above.

This approach works well for simple problems, but it quickly falls apart in real life.

There are too many edge cases, too many variations, and too many situations humans can’t anticipate.

This led to a new idea:

instead of telling computers exactly what to do, what if we let them learn from examples?

That idea is called Machine Learning.

Machine Learning is a way of building AI systems by feeding them data and letting them discover patterns on their own.

For example, rather than defining what spam email looks like, we show the system 10,000 emails labeled “spam” or “not spam,” and it learns the difference by itself.
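To make the spam idea concrete, here is a toy sketch, not a real spam filter. The four example emails and the names `train_spam_filter` and `classify` are assumptions made up for this post. Nobody writes a rule like “the word free means spam”; the program only counts which words showed up under which label.

```python
from collections import Counter

# Tiny made-up training set; a real system would use thousands of emails.
EXAMPLES = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting notes for monday", "not spam"),
    ("lunch on monday with the team", "not spam"),
]


def train_spam_filter(examples):
    """Count how often each word appears under each label."""
    counts = {"spam": Counter(), "not spam": Counter()}
    for text, label in examples:
        counts[label].update(text.split())
    return counts


def classify(counts, text):
    """Pick the label whose training emails shared more words with the text."""
    scores = {
        label: sum(word_counts[word] for word in text.split())
        for label, word_counts in counts.items()
    }
    return max(scores, key=scores.get)


model = train_spam_filter(EXAMPLES)
print(classify(model, "claim your free money"))        # spam
print(classify(model, "notes from the team meeting"))  # not spam
```

Real systems use far more data and better math, but the shape is the same: examples go in, a pattern comes out.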

Machine Learning isn’t a product you use; it’s a training method.

It’s one of the main ways modern AI systems are created.

ML exists because writing rules for everything is impossible…

So instead of writing millions of rules for a computer to follow, we let the computer learn from the examples we feed it.

So, ML is not a product.

It is a training method.

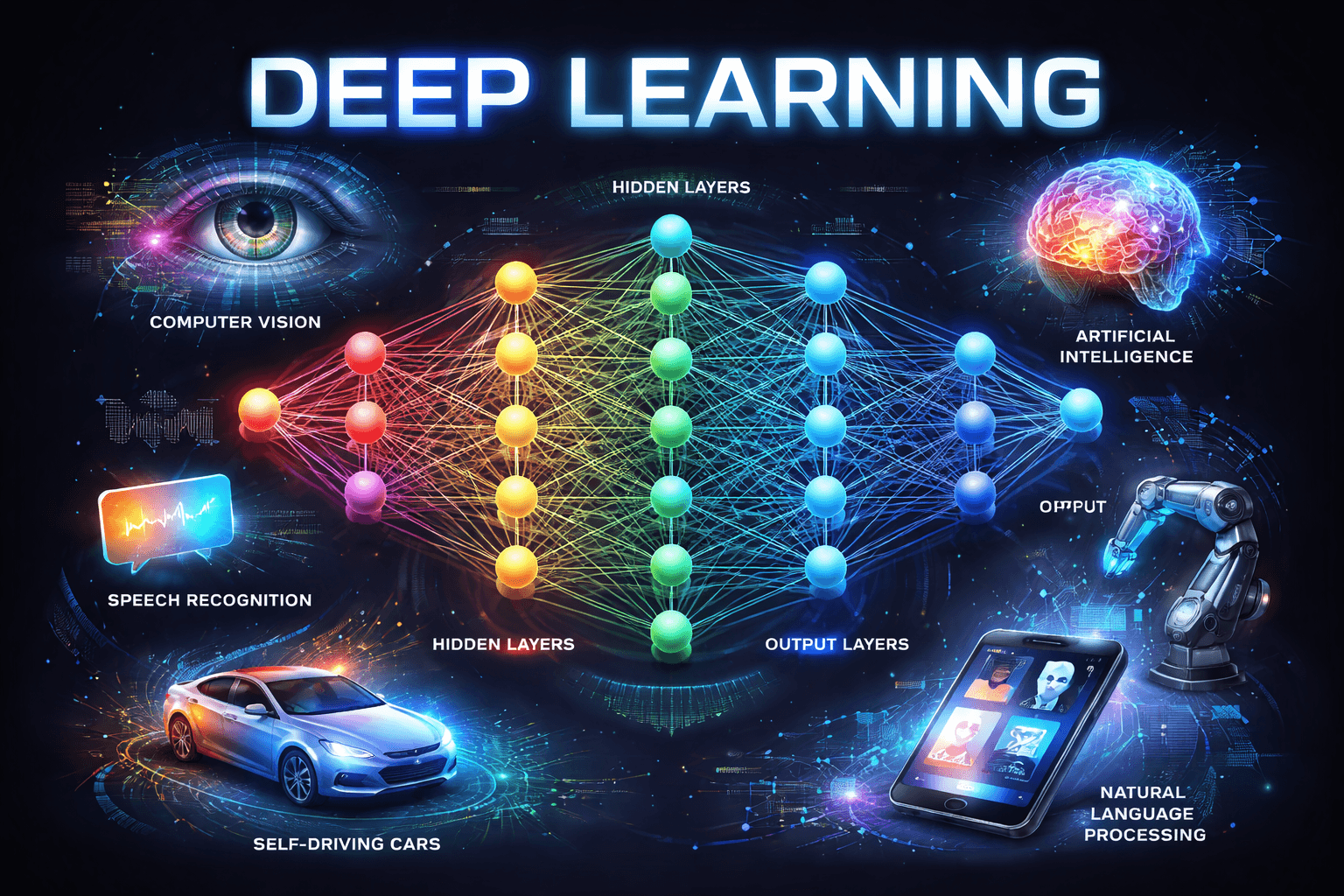

Deep Learning (DL)

Now, traditional Machine Learning works well for many tasks, but it struggles with things humans find easy, like recognizing faces, understanding speech or interpreting images.

To solve this, researchers developed Deep Learning, a more powerful form of Machine Learning inspired by how the human brain works.

What is a neural network, in easy-to-understand "bro" language?

Deep Learning (DL) = A powerful type of ML inspired by the human brain

Examples of DL are face unlock on phones, speech recognition, and self-driving cars.

To understand Deep Learning, you first need to understand neural networks, because Deep Learning is built on top of them.

A neural network is a way of teaching a computer by breaking a big problem into many very small decisions. It’s loosely inspired by how the human brain works, but you don’t need to think about biology to understand the idea.

Imagine you’re trying to recognize a handwritten number. You don’t process the whole image at once. Instead, your brain notices simple things first (lines, curves, and shapes) and then combines them into something meaningful.

Neural networks work in a similar way.

A neural network is made up of many small units called neurons.

Each neuron looks at a tiny piece of the input and answers a very simple question, like “Is there a line here?” or “Does this look like a curve?”

On their own, these neurons are not intelligent. The intelligence comes from how they are connected and how their outputs are combined. (very vague, I know, but enough to understand the concept)

The network learns by adjusting these connections based on examples. When it gets an answer wrong, it slightly changes how strongly neurons influence each other. Over time, after seeing enough data, the network becomes good at recognizing patterns.
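Here is a rough sketch of that “adjust when wrong” loop with a single artificial neuron. It uses the classic perceptron update rule, which is a deliberately simple stand-in and not how large modern networks are trained. The task (learning logical AND), the step size, and the names `fire` and `train_neuron` are all assumptions chosen for illustration.

```python
# Training examples for logical AND: two inputs and the expected output.
DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def fire(weights, bias, inputs):
    """Output 1 if the weighted sum of the inputs is above zero, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train_neuron(data, epochs=20, step=0.25):
    """Start with zero connection strengths and nudge them after each mistake."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in data:
            error = target - fire(weights, bias, inputs)
            # A wrong answer shifts each connection a little;
            # a right answer (error == 0) changes nothing.
            weights = [w + step * error * x for w, x in zip(weights, inputs)]
            bias += step * error
    return weights, bias


weights, bias = train_neuron(DATA)
print([fire(weights, bias, inputs) for inputs, _ in DATA])  # [0, 0, 0, 1]
```

Nobody told the neuron what AND means. It started out wrong, got corrected a few times, and ended up with connection strengths that give the right answers.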

Now, Deep Learning is not a different idea from neural networks.

What makes Deep Learning “deep” is the number of layers in these networks :)

For example, a deep network recognizes a face like this:

lower layers detect edges and shadows,

middle layers recognize facial features like eyes or mouths,

higher layers identify an entire face.

So, DL uses neural networks with many layers, allowing systems to learn complex patterns in data.
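A face detector is too big to show here, but the “simple detectors below, combinations above” idea fits in a few lines. In this sketch the weights are picked by hand rather than learned, and the task is a toy one: answering “exactly one of the two inputs is on” (XOR), which a single neuron of this kind cannot do by itself. The names `neuron` and `two_layer_xor` are made up for illustration.

```python
def neuron(weights, bias, inputs):
    """One unit: output 1 if the weighted sum of its inputs is above zero."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def two_layer_xor(a, b):
    # Lower layer: two simple detectors looking at the raw inputs.
    at_least_one = neuron([1, 1], -0.5, [a, b])  # fires if a or b is 1
    both = neuron([1, 1], -1.5, [a, b])          # fires only if a and b are 1
    # Higher layer: combines the detectors into "one, but not both".
    return neuron([1, -1], -0.5, [at_least_one, both])


print([two_layer_xor(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])
# [0, 1, 1, 0]
```

The higher layer never looks at the raw inputs. It only looks at what the lower layer found, which is the same trick a deep network plays with edges, eyes, and faces.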

This is what made breakthroughs like facial recognition, voice assistants, and real-time translation possible.

Deep Learning isn’t a replacement for Machine Learning; it’s a subset of it, designed for harder problems. DL simply extends ML.

Why did DL matter so much?

Once neural networks became deep, computers suddenly became good at things they were historically terrible at: vision, speech, and language. This shift is what led to modern breakthroughs like voice assistants, facial recognition, and language models such as ChatGPT.

In other words, Deep Learning is the reason modern AI feels so human-like.

Generative AI

In short: from analyzing to creating :)

For a long time, AI systems focused on recognizing, predicting, and classifying. Then came a major shift.

Instead of just analyzing existing data, AI systems began creating new content.

This is known as Generative AI.

These systems can generate text, images, music, code, and more.

They don’t retrieve pre-written answers; they generate new outputs based on what they’ve learned.

This is the category that sparked the current wave of public excitement around AI.

Large Language Models (LLMs)

Again, in short: AI that works with language. Think of the models behind your favorite ChatGPT, Claude, and Gemini.

Within Generative AI, Large Language Models focus specifically on text.

LLMs are trained on massive amounts of written language.

They learn grammar, context, and meaning by predicting the next word in a sentence, repeatedly, at enormous scale.
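To get a feel for “predict the next word”, here is a toy sketch that simply counts which word follows which. This is a basic bigram counter, not how real LLMs work inside (those use deep neural networks), and the training text and function names are made up for illustration.

```python
from collections import Counter, defaultdict

# A tiny made-up "training text"; real LLMs train on vastly more.
TEXT = "the cat sat on the mat . the cat sat on the sofa . the cat ate the fish ."


def train_bigrams(text):
    """Count, for every word, which words come right after it."""
    following = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the word seen most often after `word`.

    Assumes `word` appeared in the training text with something after it.
    """
    return following[word].most_common(1)[0][0]


def generate(following, start, length):
    """Repeatedly predict the next word, feeding each guess back in."""
    words = [start]
    for _ in range(length):
        words.append(predict_next(following, words[-1]))
    return " ".join(words)


model = train_bigrams(TEXT)
print(predict_next(model, "the"))  # cat
print(generate(model, "the", 4))   # the cat sat on the
```

The toy version only looks one word back. Real LLMs look at a long stretch of preceding text, which is a big part of why their output reads so much better.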

They can write articles, summarize documents, answer questions, and help with programming, not because they understand language like humans do, but because they’ve learned its patterns extremely well.

Chatbots

One final piece often gets mixed in with everything else: chatbots.

A chatbot is simply the interface that allows humans to interact with an AI system through conversation.

It’s not the intelligence itself.

The screen that you see when you open ChatGPT is a chatbot.
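The split between “the interface” and “the intelligence” can be sketched in a few lines. Everything here is hypothetical: `toy_model` is a stand-in that just echoes the message, and the `Chatbot` class is not any real product's code. It only shows that the chat part keeps the conversation and hands each message to whatever model sits behind it.

```python
def toy_model(prompt: str) -> str:
    """Stand-in for the model, the part that produces the reply (hypothetical)."""
    return f"You said: {prompt}"


class Chatbot:
    """The interface: keeps the conversation and passes messages to a model."""

    def __init__(self, model):
        self.model = model
        self.history = []

    def send(self, message: str) -> str:
        reply = self.model(message)
        self.history.append(("user", message))
        self.history.append(("assistant", reply))
        return reply


bot = Chatbot(toy_model)
print(bot.send("hello"))  # You said: hello
```

Swap `toy_model` for something smarter and the `Chatbot` class does not change at all. That is the sense in which the chatbot is not the intelligence itself.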

What is ChatGPT?

ChatGPT is a chatbot, meaning it’s the conversational interface you interact with, but the intelligence behind it comes from something deeper.

Under the hood, ChatGPT is powered by an LLM, a type of AI model trained using deep learning techniques on massive amounts of text so it can understand and generate human-like language.

So, ChatGPT is not “the AI itself,” but a product built on top of an LLM.

Large Language Models are just one category of modern AI models. Others include computer vision models (for images and video), speech and audio models (for voice and sound), recommendation and prediction models (used in search, ads, and personalization), and reinforcement learning models (used in robotics and game-playing systems).

Putting it all together

AI is the umbrella term we use when something feels intelligent.

Machine Learning and Deep Learning describe how that intelligence is created (by training models on large amounts of data).

Generative AI explains what the system can do: create new content.

Large Language Models specialize in text.

Chatbots are how we talk to all of it.

When people casually say “AI,” they’re not wrong; they’re just speaking at a higher level. We humans like mental shortcuts, because all of us are "a little lazy".

We do this all the time.

We say “car” instead of listing the engine, transmission, electronics, and software working together.

We say “the internet” without thinking about cables, servers, protocols, and data centers spread across the world.

In the same way, “AI” has become a shorthand for an entire stack of technologies.

Most people aren’t talking about algorithms, training methods, or neural networks; they’re talking about the experience. If something talks, recommends, recognizes, or decides, we call it AI.

Over time, the umbrella term sticks, while the technical details fade into the background.